Smart Segmentation Playbook

A four-layer self-audit for UK CX and insight leaders.

TL;DR

Most customer segmentations stop at one layer—the easiest one. Demographics describe who your customers are, but not why they act, and not what they actually want from you when something goes wrong. This playbook is a four-layer self-audit—Demographics, Behaviour, Attitudes, Motivations—designed to help you spot which of those layers your current segmentation already covers, which ones it's missing, and what those gaps are quietly costing you: the segments that look healthy but are silently churning, the campaigns that work brilliantly for half your audience and bounce off the other half, the board questions you can't yet answer because your data only goes one layer deep.

Read it through and you'll have a clear picture of where your segmentation isn't doing the work you need it to—and what kind of work would close the biggest gap.

Your segmentation probably stops at Layer 1

Most do. Age, location, tenure, property type, income band—that's where most UK customer-insight operations plateau. It's not wrong. Demographics are the easiest data to collect and the easiest cut to explain to a board. They're just one layer of four.

If that's where you are, you're in plenty of company—and you probably already half-know it. The board asks why retention is moving in a segment your model doesn't really recognise. The campaign that worked brilliantly for half your audience bounces clean off the other half. A satisfied segment turns out to have been quietly churning for six months. Nothing's broken exactly. It's just that demographics describe who customers are without explaining why they act. The other three layers—Behaviour, Attitudes, Motivations—are where the why lives.

This is the trap we keep seeing: segmentation that ends up as reporting decoration—a colour on a chart, a row in a deck, a category that looks crisp on a slide but doesn't actually drive a different decision anywhere in the business. The four-layer framework in this playbook is what segmentation looks like when it stops decorating reports and starts shaping decisions.

Some sectors are already operating in those layers, often because their regulator has nudged them there. Ofgem commissioned GfK to build a segmentation of energy consumers that is attitudinal, not demographic, and published "golden questions" suppliers can use to place their own customers into segments. The Financial Conduct Authority's Consumer Duty treats behavioural data (what customers actually do) as primary evidence that firms are delivering good outcomes. The Housing Ombudsman frames tenant stigma as relational, not demographic: research from the G15 (London's largest housing associations) found that 43% of residents say interactions with their landlord are the principal source of it. Different sectors, different reasons, same underlying move: out of Layer 1 and into the rest.

None of this is a case for throwing out the segmentation you've already built. Demographics are real, useful, and load-bearing where they connect to a service decision. The audit is about what happens when Layer 1 is asked to do work it can't—when the segments your team trusts are quietly hiding the variance the other three layers would show.

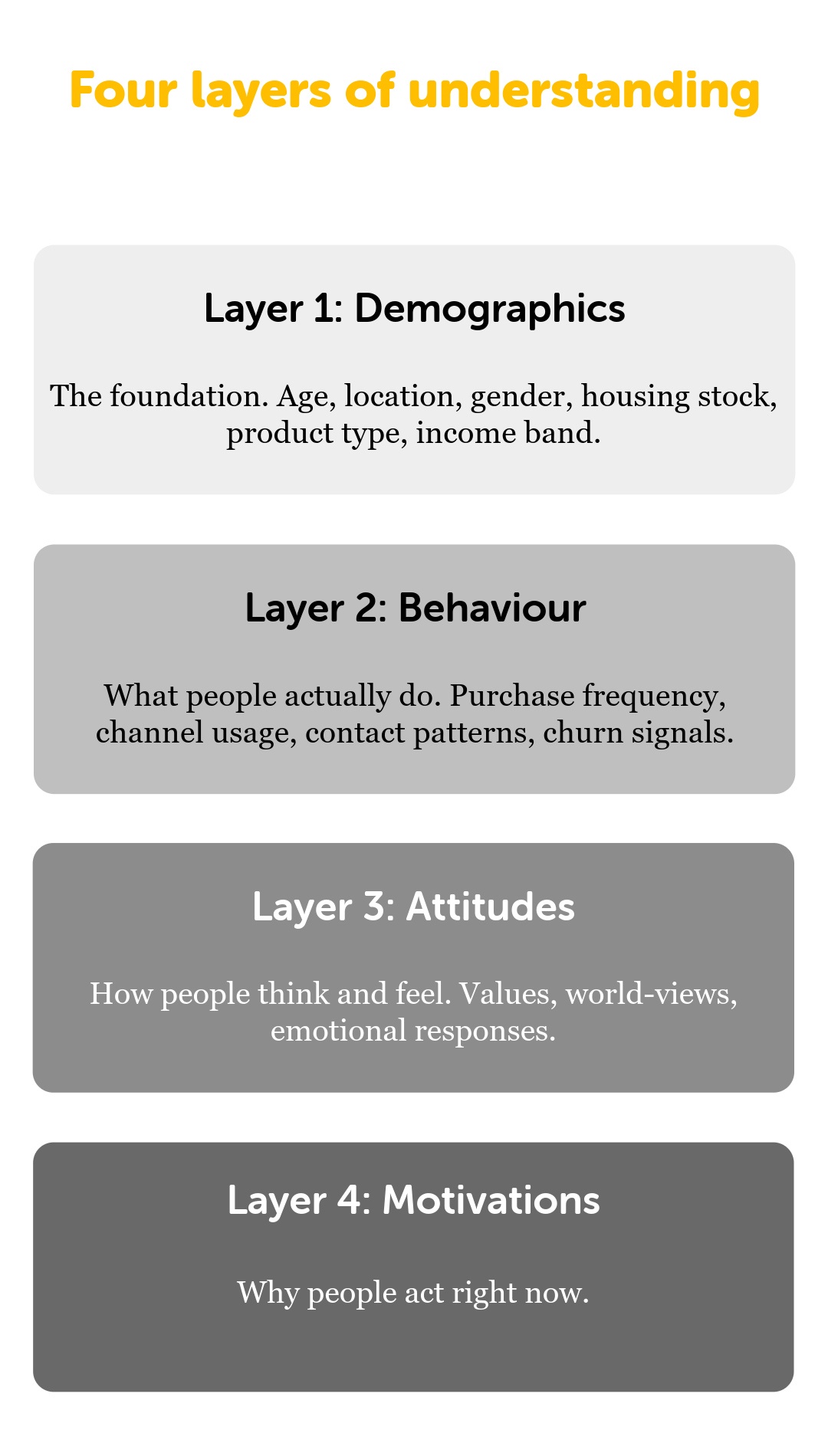

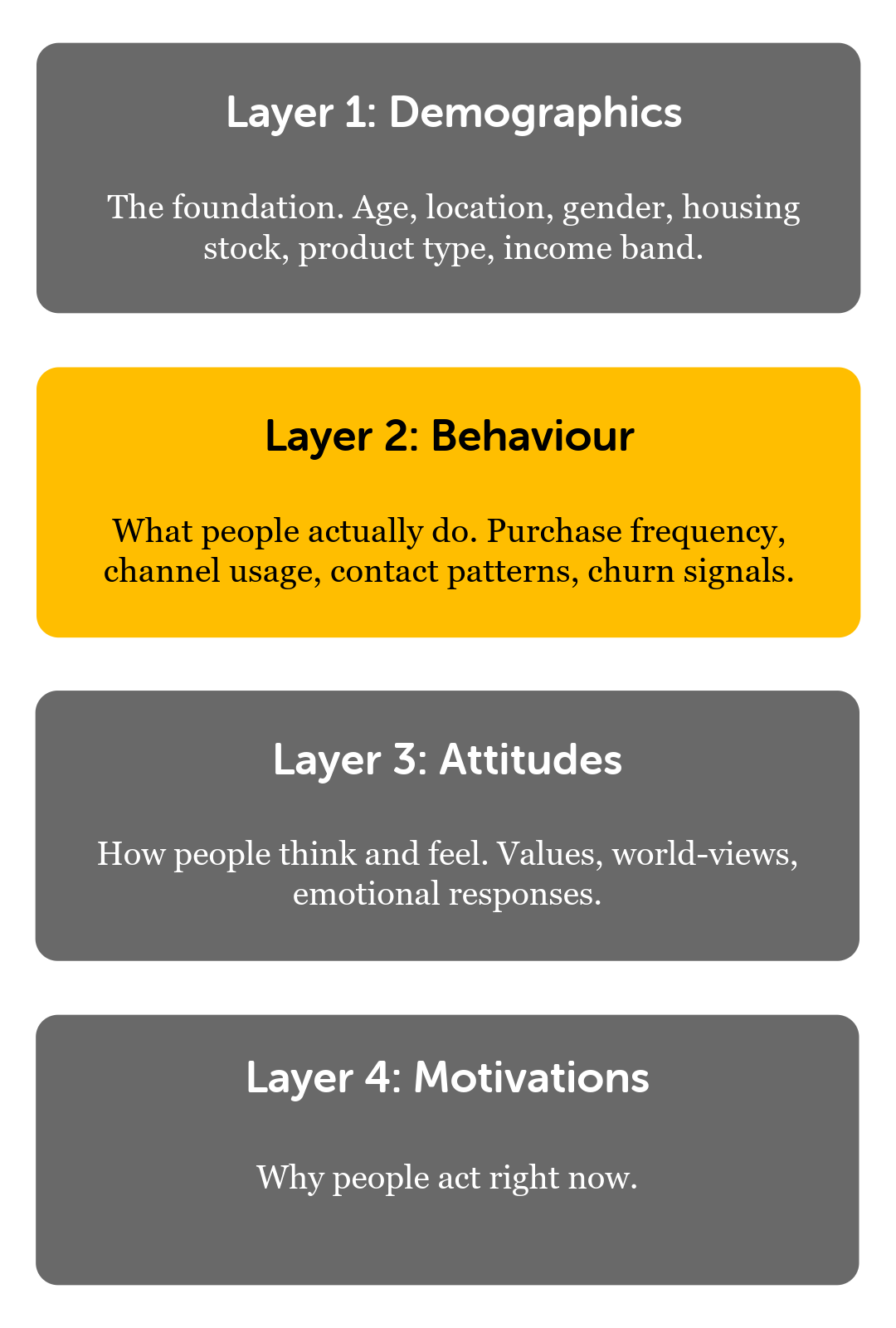

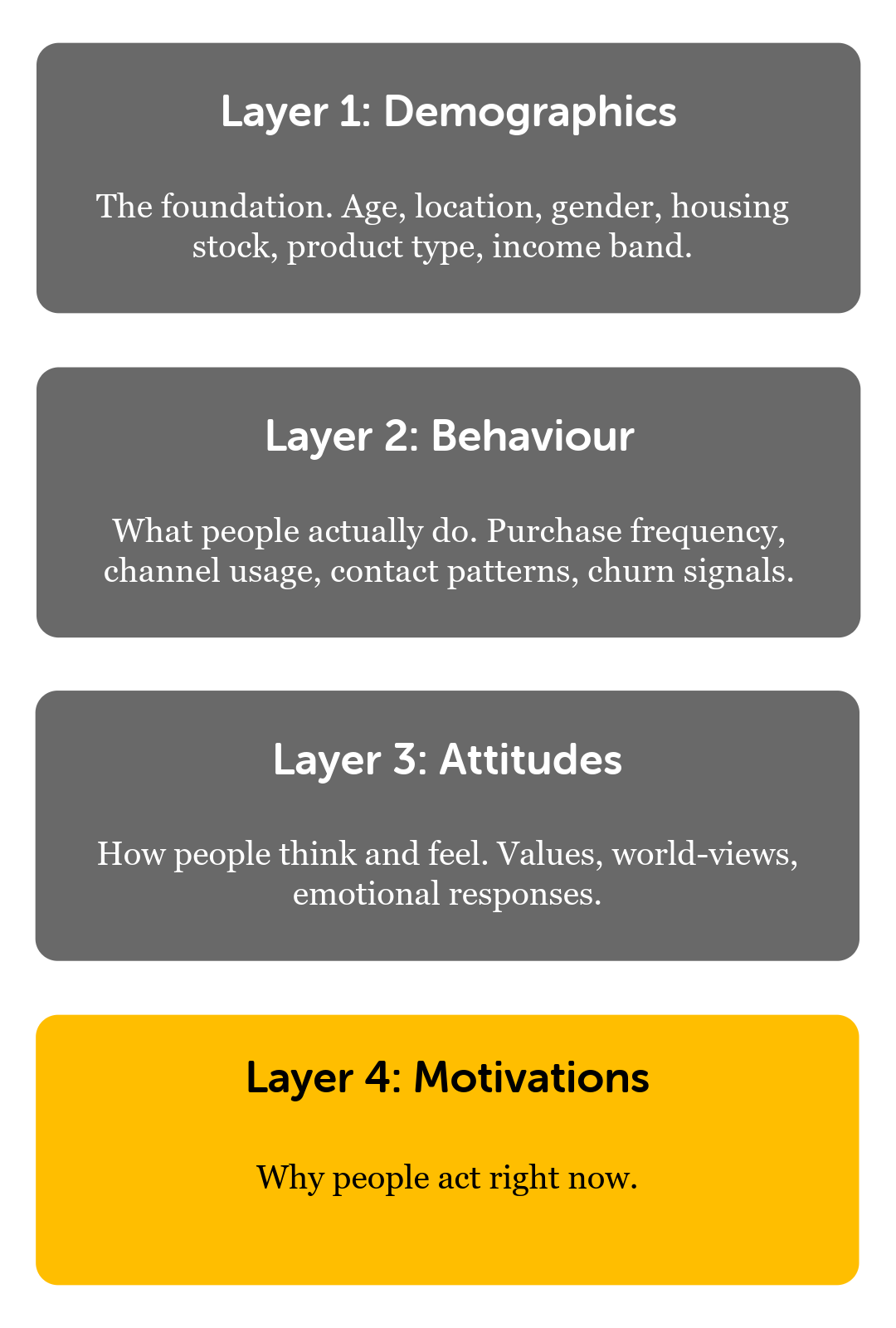

If you're not segmenting using techniques like these yet, this playbook walks you through it. Four layers, in order:

- Layer 1—Demographics (who your customers are)

- Layer 2—Behaviour (what they actually do)

- Layer 3—Attitudes (how they think and feel)

- Layer 4—Motivations (why they act now)

Each layer has the same shape: what it is, the evidence for why it matters, the specific red flags that tell you you're missing it, and one concrete next action you could start next week.

Let's start with Layer 1.

Layer 1. Demographics: who your customers are

Foundations

Demographics are the oldest layer of the four and the one with the longest administrative history. The age bands every UK research team defaults to (18–24, 25–34, 65+ and the rest) come from census convention, not from any evidence that people inside a band share meaningful attitudes or behaviours. The 65+ threshold in particular is a pension-policy artefact dating to the Old Age Contributory Pensions Act 1925. Market research inherited the bands because they matched census denominators. We've been using them ever since because everybody else does.

Modern gerontology draws a sharper distinction than any census band does. It separates chronological age (years since birth), functional age (the physical, cognitive, and social capabilities someone actually has), and subjective age (how old a person feels and the identity they claim). For most CX-relevant outcomes—whether someone can use a self-service channel, whether they'll change provider, whether they'll complain when something goes wrong—functional and subjective age predict better than chronological age. Chronological age is the variable you have. The other two are the ones you want.

None of this means demographics are useless. Property type in housing genuinely drives different service experiences. Journey purpose in rail genuinely shapes satisfaction expectations. Income decile genuinely predicts tariff-plan fit. The trap is treating demographics as the primary axis of segmentation when the outcome you're trying to predict is behavioural, attitudinal, or about what a customer actually needs from your service.

Health check

Don't worry about ticking everything off—this is one layer of four, not a maturity scorecard. The more of these are true for you, the more confident you can be that demographics are doing real work, not just decorating reports:

- Each demographic cut you use leads to a different service decision somewhere, not just a different colour on a chart

- Where you're using a wide band (any age band, an income range, a tenure category), you've checked whether people inside it actually behave similarly, instead of assuming they do

- For any demographic segment, you can name three things you assume about those customers beyond their demographics, and you've checked at least roughly that those assumptions hold

Red flags

The "65+" trap is the clearest demographic-layer failure mode. Zoe captured this in the Smart Segmentation webinar: her newly-retired dad spends his days on the golf course and walks 10k a day. Her 91-year-old gran watches golf from the sofa and wanders a hundred metres down the street to say hello to her neighbours. Same age band. Completely different people, with completely different service needs.

The bigger picture lines up with what Zoe described. The English Longitudinal Study of Ageing—a long-running UK study tracking people aged 50+—shows that within any five-year cohort above 65, people sit at very different points on cognition, self-rated health, day-to-day capability, and wealth. The spread inside a band overlaps almost entirely with the bands either side of it. ONS healthy-life-expectancy data lands the same point: the gap between the most and least deprived English regions, just within the over-65s, is wider than the average difference between adjacent five-year age bands. In practical terms: two of your "65–74" customers can need genuinely different things from you, and the band on its own won't tell you which is which.

The Housing Learning and Improvement Network has argued for over a decade that designing services around age thresholds gets it wrong in both directions at once. On one side, plenty of over-65s in general-needs housing have functional needs—mobility, cognition, isolation—that the standard service model wasn't built to spot. On the other, plenty of residents in age-designated older-person schemes are fitter, more independent, and more digitally confident than the support model assumes. Both groups end up in the wrong service for them. Inside Housing has covered the sector-wide shift away from age-based older-person service models toward needs-based allocation, driven partly by Regulator of Social Housing consumer-standard pressure.

The critique isn't 65+-specific. Even the standard 10-year bands hide a lot. The 25–34 band collapses students finishing courses, early-career renters in shared housing, young parents, and first-time buyers into one unit. The 45–54 band stretches from school-age parents to empty-nesters, peak-earning to redundancy, mortgage-paying to mortgage-free. JRF's work on young-adult housing precarity has shown bimodal patterns within bands that any single-number cut hides.

If your segmentation uses age bands as a primary axis for predicting behavioural or attitudinal outcomes, the variation inside the bands is very likely doing more predictive work than the difference between them. And you can't see it because you're looking at the average.
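One rough way to check the claim above on your own data is to split the total variance of an outcome into the part that sits between bands and the part that sits inside them. The sketch below does that with the standard sum-of-squares decomposition. The scores, the band labels and the function name `variance_split` are all invented for illustration; swap in whatever outcome you actually hold (a satisfaction score, a confidence pulse, a contact count).

```python
from statistics import mean

def variance_split(groups):
    """Return (between_share, within_share) of total variance for grouped scores.

    groups maps a band label to a list of numeric scores for customers in that band.
    """
    all_scores = [s for scores in groups.values() for s in scores]
    grand_mean = mean(all_scores)
    total_ss = sum((s - grand_mean) ** 2 for s in all_scores)
    if total_ss == 0:
        raise ValueError("all scores are identical; nothing to decompose")
    # Between-band sum of squares: how far each band's mean sits from the overall mean
    between_ss = sum(
        len(scores) * (mean(scores) - grand_mean) ** 2 for scores in groups.values()
    )
    within_ss = total_ss - between_ss
    return between_ss / total_ss, within_ss / total_ss

# Hypothetical self-service confidence scores (1-10) by age band, invented for illustration
scores_by_band = {
    "55-64": [2, 9, 5, 8, 3, 7],
    "65-74": [1, 8, 4, 9, 2, 6],
    "75+": [1, 7, 3, 8, 2, 6],
}

between, within = variance_split(scores_by_band)
print(f"between bands: {between:.0%}, within bands: {within:.0%}")
```

If the within-band share dominates, the band is averaging over customers who differ more from each other than the bands differ from one another. This is a back-of-envelope check, not a substitute for a proper model.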

Next action

Pick a demographic segment your team treats as fairly uniform—an age band, a tenure type, an income band, a property type, whatever's currently load-bearing in your segmentation. Then test the assumption a couple of ways:

- Pull the last campaign or service interaction targeted at that segment. Did everyone in it respond the same way? Where the response, satisfaction, or complaint pattern splits within the segment, that's variance the band is hiding.

- Ask the colleague closest to those customers (a frontline team, a journey owner, an account manager) to describe three real examples from that segment. One group, or three?

- Run a single attitudinal pulse on a sample of the segment (something like "how confident are you that you can resolve an issue with us yourself?") and look at the spread within the segment, not the average.

If any of these surface clear splits, Layer 1 is doing less predictive work for you than it looks like it is—and that's the opening Layers 2, 3, and 4 are designed to fill.

Layer 2. Behaviour: what your customers actually do

Foundations

Behavioural segmentation classifies customers by what they actually do: what they bought, how often, through which channel, how they engaged with communications, how they complained, how long they stayed. In our work with feedback teams across various industries—including housing, utilities, retail, finance, travel, and hospitality—this is the layer most organisations already have the data for, and the layer they most systematically underuse.

A handful of well-established frameworks underpin it:

- RFM (Recency, Frequency, Monetary value): first used in 1960s–70s direct marketing, since refined into probability models that estimate who's about to come back, who's drifting, and who's already lost.

- Engagement cohort analysis: borrowed from SaaS. Groups customers by when they first engaged and tracks how engagement decays from there.

- CLV tiering: segments by predicted customer lifetime value.

- Unsupervised clustering: instead of telling the model which segments to look for, you let it surface the natural groupings in the data and then judge whether those groupings are useful. The algorithms underneath (k-means and similar) handle the maths; the conceptual move is letting archetypes emerge from the data rather than imposing them on it.

What they all have in common: they need transactional or interaction data your organisation almost certainly already has. That's why this layer is high-volume, continuously updating, and nearly free for anyone already running operational systems—it sits usefully between demographics (stable, low-resolution) and attitudes (high-resolution, drawn from surveys and from open-text feedback, complaints, contact-centre transcripts, and chat logs—anywhere customers describe what they think).
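To make the first of those frameworks concrete, here is a minimal sketch of basic RFM scoring using only the Python standard library. It is the simple rank-based version, not the probability models mentioned above. The customer records, the field names (`last_order`, `orders`, `spend`) and the three-bin scoring are assumptions made for illustration; your own transactional data will have different fields and far more rows.

```python
from datetime import date

def rank_score(values, higher_is_better=True, bins=3):
    """Map each value to a 1..bins score by rank position (ties broken by input order)."""
    order = sorted(
        range(len(values)), key=lambda i: values[i], reverse=not higher_is_better
    )
    scores = [0] * len(values)
    for position, idx in enumerate(order):
        scores[idx] = 1 + position * bins // len(values)
    return scores

def rfm_scores(customers, today, bins=3):
    """Return {customer_id: (R, F, M)} where a higher score is better on every axis."""
    ids = list(customers)
    recency_days = [(today - customers[c]["last_order"]).days for c in ids]
    frequency = [customers[c]["orders"] for c in ids]
    monetary = [customers[c]["spend"] for c in ids]
    # Fewer days since the last order is better, so recency ranks in reverse
    r = rank_score(recency_days, higher_is_better=False, bins=bins)
    f = rank_score(frequency, bins=bins)
    m = rank_score(monetary, bins=bins)
    return {c: (r[i], f[i], m[i]) for i, c in enumerate(ids)}

# Hypothetical customers, invented for illustration
customers = {
    "A": {"last_order": date(2024, 5, 28), "orders": 14, "spend": 920.0},
    "B": {"last_order": date(2024, 1, 10), "orders": 3, "spend": 150.0},
    "C": {"last_order": date(2024, 4, 2), "orders": 7, "spend": 410.0},
}

scores = rfm_scores(customers, today=date(2024, 6, 1))
for customer_id, rfm in scores.items():
    print(customer_id, rfm)
```

Two customers who land on the same RFM triple are behaviourally alike by this measure. On its own, that says nothing about whether they trust you or are about to leave.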

Health check

Most teams we talk to spot themselves on this list somewhere partway down, not all the way at the bottom—so don't sweat full ticks. Look for direction of travel rather than perfection:

- You can name a handful of behavioural signals your service generates that you actively use, not just collect

- Your behavioural cuts lead to different actions for different segments somewhere in the business, not just different lines on a chart

- At some point, someone has eyeballed whether the behavioural cut predicts outcomes better than demographics alone, formally or back-of-envelope

- You watch at least one or two signals that predict customer change (leading indicators), not only signals that confirm it after the fact (lagging indicators)

Red flags

Behavioural-only segmentation hits a ceiling—and most teams discover it on their own data, the hard way. The first half of the journey is genuinely useful: across marketing, banking, and telecoms, models built on what customers do (their transactions, their channel choices, their complaint patterns) predict churn and retention by a meaningful margin over models built on who they are. So far, so good.

Then it stops improving. You can keep adding behavioural features—new channels, new transaction types, more granular usage data—and the model just doesn't sharpen. The customers it couldn't explain last quarter still aren't explained this quarter. The churn it didn't predict last year, it still doesn't predict this year either. The temptation at this point is to throw more behavioural data at the problem; it rarely helps. Worse, it's the moment behavioural segmentation drifts into reporting decoration—still on the slide, still being refreshed quarterly, no longer driving anything new.

The ceiling has a specific shape. Two customers can look identical on every behavioural measure you have—same transactions, same frequency, same channels—and end up on completely different satisfaction, advocacy, and churn paths. Two bank customers with identical RFM scores can hold radically different levels of trust in the institution. Two tenants with identical repair-request frequency can have opposite satisfaction trajectories. Two rail passengers with identical journey patterns can carry very different complaint propensities. The customer-analytics reviews from the last decade keep surfacing the same pattern: behaviour explains a lot, but it stops explaining at the moment two people behave the same way for completely different reasons.

It produces two characteristic mistakes:

- Silent dissatisfaction. Customers whose behaviour looks healthy (regular usage, stable spend, no complaints) but whose attitudinal state has been quietly deteriorating for months. They leave without warning or stop recommending you without ever telling you why.

- Vocal loyalists misread as risks. Customers whose high complaint frequency or channel-switching makes them look like flight risks, when actually they're engaged advocates whose complaining is a form of investment in the relationship. Push them through a retention programme and you'll lose the people most committed to seeing you improve.

Both errors reverse the moment attitudinal data is layered in—which is the bridge into Layer 3.

Some regulators are already working through this in real time. The FCA's Consumer Duty framework treats behavioural data (product switching, complaint rates, arrears emergence) as primary evidence of customer outcomes, while explicitly acknowledging that behaviour is a limited proxy for vulnerability. Ofgem takes a similar approach with energy customers, prioritising behavioural indicators (payment-method change, consumption-pattern change, contact-channel change), but has documented that behavioural-only proxies systematically miss customers whose circumstances have changed without their behaviour catching up yet. The regulators are saying out loud what behavioural data alone can and can't see.

In housing, the Housing Ombudsman's Spotlight reports identify a consistent set of tenant-level behavioural signals that predict complaint escalation months in advance—repair-request frequency and re-raise rate, complaint escalation pattern, contact-channel choice, survey-response behaviour. The same reports also warn that silent dissatisfaction goes under-detected in tenants whose behavioural profile is stable. Watching the behaviour catches the loud cases. It doesn't catch the quiet ones.

Next action

Pick a behavioural segment your model treats as homogeneous—your "high-churn-risk" group, your "engaged" group, your "lapsing" group, whichever is currently load-bearing for you. Then look inside it:

- Pull two or three real customers from the segment and trace their actual story. Same behaviour pattern, same reason underneath? Or does the same behavioural footprint cover genuinely different motivations to leave (or stay)?

- Run any open-text feedback you already hold for that segment through whatever analysis you've got. If themes split within the segment, you've found variance the behavioural cut is hiding.

- Compare two recent retention campaigns that targeted the same behavioural segment with similar offers. Did they perform similarly? If one beat the other clearly, the variance is between the customers, not the offers.

If the inside of a segment doesn't hold together, your behavioural model is naming the symptom, not the cause—and that's where Layer 3 starts to earn its place.
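For the campaign comparison in the list above, "clearly" can be given a rough statistical meaning with a two-proportion z-test. The sketch below uses only the standard library. The retention counts are invented, and the function name `two_proportion_z` is ours. A small p-value says only that the gap is unlikely to be chance. It does not say why the campaigns differed.

```python
from math import erf, sqrt

def two_proportion_z(success_a, n_a, success_b, n_b):
    """Two-sided z-test for the difference between two response rates.

    Returns (z, p_value), using the pooled-proportion standard error.
    """
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Standard normal CDF via erf, then doubled for a two-sided test
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value

# Hypothetical results: customers retained out of customers targeted, invented for illustration
z, p_value = two_proportion_z(success_a=120, n_a=1000, success_b=80, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

If two similar offers to the "same" segment produce a gap this unlikely to be noise, the likelier explanation is that the two campaigns reached different kinds of customer inside that segment.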

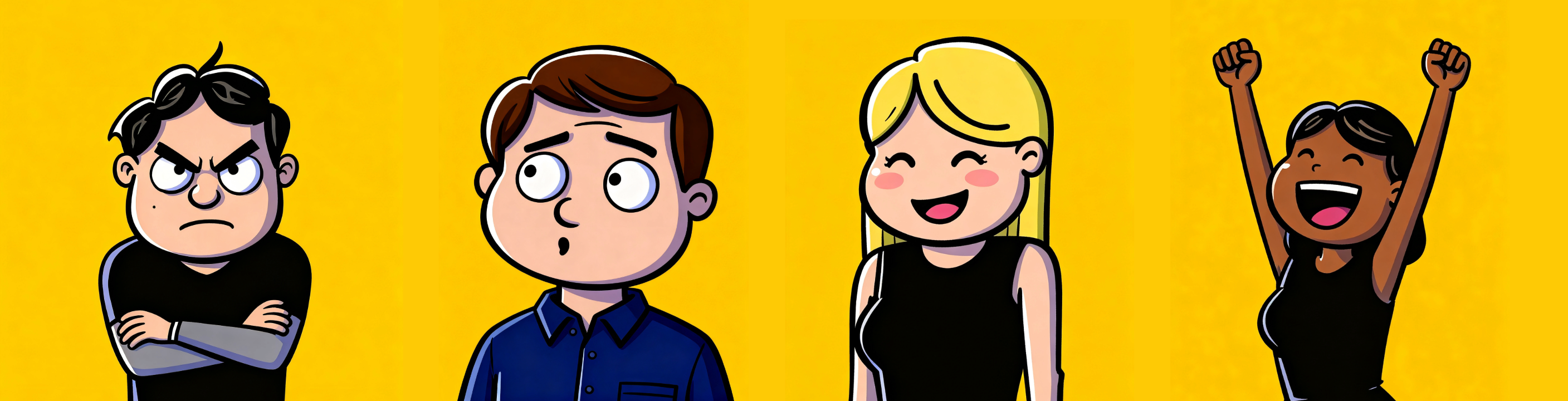

Layer 3. Attitudes: how your customers think and feel

Foundations

Attitudinal segmentation is where the predictive ceiling of Layer 2 gets broken. Values, worldviews, emotional associations, beliefs about the sector, trust in the provider—the stable dispositions that explain why two customers who look identical on every demographic and every behavioural measure end up on opposite satisfaction trajectories. This is also—we'd argue, biased as we obviously are—the layer where the stuff customers say in their own words starts pulling its weight.

There's a long-standing tradition in UK research—from Values Modes through Schwartz's basic values to NatCen's British Social Attitudes survey—that takes attitudes seriously as a way of understanding people. They differ in scope, method, and audience, but they share an underlying claim: attitudes, values, and beliefs vary systematically within populations that look uniform on demographic data, and that variation is measurable, stable enough to act on, and meaningfully independent of demographics. That's why a tenure- or age-based segmentation can never approximate an attitudinal one.

Health check

This is the layer most CX teams have least of, so the bar here is genuinely lower than the others. Even rough early progress counts:

- You hold some attitudinal data on your customers, not just behavioural or demographic—even if you haven't formalised it into named segments yet

- When you look at open-text feedback, you're at least sometimes looking for what people feel and believe, not just which topics get mentioned or whether the sentiment came out positive or negative

- You can place your customers into at least two or three rough attitudinal groups, with some evidence behind the cut—even if it's two groups, not twelve

- You've at least sense-checked whether those attitudinal groups predict outcomes (satisfaction, loyalty, advocacy) that demographics alone can't

Red flags

The most common red flag is the "everyone in my sector wants the same thing" objection. We heard it directly during the Smart Segmentation webinar: if customers in a regulated sector all need the same functional thing—affordable housing, gas supply, punctual trains—can attitudinal segmentation meaningfully differentiate them?

The evidence says yes. And in regulated sectors, the regulators are doing it already.

Ofgem commissioned GfK in 2017 to build an attitudinal segmentation of energy consumers "to help better understand consumers' underlying barriers to and drivers of engagement," and published "golden questions" so suppliers, consumer bodies, and downstream researchers could place their own customers into the segments. Alongside this, the Centre for Sustainable Energy maintains a set of 24 consumer archetypes (refreshed in 2024), explicitly designed to surface vulnerable consumers whom a pure income-decile cut would miss.

Ofwat frames affordability uptake in explicitly attitudinal terms. The regulator notes that only a third of bill payers know financial support is available, and that customers in vulnerable circumstances are "often unlikely to reach out for support, which could be because of feelings of shame, denial or helplessness." Shame, denial, helplessness—attitudes, not demographic attributes. Two customers with identical income, household composition, and region can sit at very different points on that axis. And the axis is what predicts whether they actually apply for the WaterSure or social tariff they're entitled to.

Housing is the sector with the most visible attitudinal gap. The Housing Ombudsman's severe maladministration reports consistently frame the core failure as one of attitude and communication rather than technical performance. Research from London's largest housing associations, referenced in Ombudsman work, found that 43% of residents identified interactions with their landlord as the principal source of stigma. CIH's guide to tackling stigma in social housing reaches the same diagnosis from the professional-standards angle: "negative assumptions about tenants are reflected in how they are treated." No UK social housing provider has yet published a case study using Values Modes segmentation in the open literature, which is itself a finding. The framework is mature and UK-native; the sector hasn't applied it yet. The precedents in energy and water suggest that when a regulated sector does commission this work, the regulator tends to be the one commissioning it.

The shorter version: in regulated sectors, the regulators have already broken ground on the attitudinal layer. Whether you're inside a regulated sector or not, the same principle holds: any population that looks uniform on demographics and behaviour is hiding meaningful attitudinal variation that you can only see if you go looking for it.

Next action

You don't need a fresh research project to start exploring this layer. The substrate is whatever customer-language you already have—open-text survey responses, complaint narratives, contact-centre notes, chat logs. The exercise is to read through a batch of it with attitudinal patterns in mind: trust, fairness, control, dignity, hope, frustration. Where do those repeat? Where do they cluster? Where do you find variation you weren't expecting?

If you're in a sector where the regulator has already done foundational attitudinal work—energy, water, housing—their published material can sit alongside your own data as a useful reference point.

The aim isn't a finished segmentation in week one. It's noticing that the variation your demographic cuts can't see is already in your data, written in your customers' own words.
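As a crude first pass at the read-through described above, a keyword tally shows roughly where attitudinal cues repeat before anyone reads the comments in depth. Everything in the sketch below is an assumption made for illustration: the cue lexicon, the example comments and the function name `tally_cues`. Keyword matching misses negation, sarcasm and anything phrased in words you did not list, so treat it as a way to decide where to read closely. It does not stand in for the reading.

```python
import re
from collections import Counter

# Hypothetical cue lexicon; a real one should be built from your own customers' language
CUES = {
    "trust": {"trust", "believe", "honest", "lied"},
    "fairness": {"fair", "unfair", "equal"},
    "control": {"control", "choice", "powerless"},
    "dignity": {"respect", "ignored", "patronised", "dignity"},
}

def tally_cues(comments, cues=CUES):
    """Count how many comments mention each attitudinal cue at least once."""
    counts = Counter()
    for comment in comments:
        words = set(re.findall(r"[a-z']+", comment.lower()))
        for label, vocabulary in cues.items():
            if words & vocabulary:
                counts[label] += 1
    return counts

# Invented example comments
comments = [
    "I don't trust the repair dates any more, I feel ignored.",
    "It's unfair that I had no choice of appointment.",
    "Engineer was on time, thanks.",
]

counts = tally_cues(comments)
for label, n in counts.most_common():
    print(f"{label}: {n} of {len(comments)} comments")
```

Running the same tally separately for each demographic or behavioural segment gives a quick view of whether the cues cluster differently inside groups you currently treat as uniform.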

Layer 4. Motivations: why your customers act now, in this moment

Foundations

Where attitudes describe stable dispositions, motivations describe the reason someone acts in this moment. Motivations shift with life events, news cycles, service incidents, and how salient a particular concern feels today. A customer whose attitudes haven't budged in five years can have a motivational profile that moves week to week. This is the layer where the question stops being "who is this customer?" and becomes "what are they trying to do right now?"

There's a body of well-known business and marketing literature on what motivates customers to act—different lenses for different sectors—and most CX leaders have come across at least some of it. The point we want to make here isn't which lens. It's that the right one for your sector is usually different from the one for someone else's sector, and the metric you're using right now might be pointing at the wrong thing for your customers altogether.

Health check

Hardly anyone has all of this in place. Realistically, this is the layer where you're aiming to do some of it on purpose rather than zero of it by accident:

- You measure something about what motivates action now, not only what people think in general

- You've at least picked a motivational lens that matches your sector's customer relationship (more on which lens fits which sector in Red Flags), even if you're not yet measuring against it

- You can name a couple of motivational drivers in your sector that don't appear anywhere in your current CX metrics

- You've got some way—even informal—of spotting when a customer's motivational state has shifted, not only when their behaviour has

Red flags

The clearest motivational-layer failure mode is the one-size-fits-all CX metric trap: using the same headline metric (NPS, CSAT, sometimes both) across sectors whose customers are motivated by completely different things. You end up with noise where you should be getting signal, and you can't always work out why.

Different sectors don't all motivate the same way. Some are about minimising friction—getting somewhere on time, resolving a problem quickly, finding what you needed without effort. Some are about quality of experience—memorability, personal engagement, the feeling of being valued. Plenty of sectors sit somewhere in between, where reliability is the baseline and how customers feel treated when something goes wrong is the bit that drives loyalty.

UK regulated sectors illustrate the range. UK rail's measurement apparatus (Transport Focus's National Rail Passenger Survey and the ORR's Rail User Survey) reflects the friction-minimising end. The Regulator of Social Housing's Tenant Satisfaction Measures reflect the in-between zone: 12 measures that span both the transactional (repairs, safety) and the explicitly relational (keeping tenants informed, treating fairly and with respect, listening and acting on views, handling complaints).

The red flag to look for in your own organisation: if your sector sits in the in-between zone but you're measuring it with a single friction-dominant metric (NPS or CES on its own), you'll be measuring the reliability half reasonably well and missing the dignity-fairness-voice piece almost entirely. That's the half that drives complaint escalation in housing and regulated utilities. Energy-policy research finds repeatedly that being treated fairly predicts complaint escalation and regulator contact better than the absolute service-failure rate. Customers tolerate a fair amount of imperfection if the handling feels fair. If your segmentation doesn't surface that, you're measuring the wrong thing for your sector.

Next action

Try one of these:

- Look at your current customer-experience dashboard. Which of the things that motivate your customers—effort, fairness, voice, dignity, memorability—actually show up in the metrics you report? What's missing, and what are you doing today instead of measuring it?

- Pick the headline metric your team most relies on (NPS, CSAT, CES, an internal score). Compare what it's measuring against what your customers actually care about in your sector. Where does the metric stop short?

- If you could only measure three things about customer motivation in your sector, what would they be? Are any of them measurable today? If not, what would it take?

The honest version of any of these tends to surface where Layer 4 isn't being captured at all.

Limited Time Offer: The Smart Segmentation Report

If running the four-layer framework against your own customer data sounds more useful than working through this solo, that's what the Smart Segmentation Report is for.

We take the feedback you already have (surveys, complaints, contact-centre transcripts, chat logs), run the framework against it, and walk you through what each layer shows in your data, with segments, evidence, and a theme bank your team can keep using afterwards.

What this needs to actually work

The substrate for all of this is whatever customer-language data you already have—open-text feedback, complaint narratives, contact-centre transcripts, chat logs. You don't need new platforms; you need to look at what's already there through an attitudinal and motivational lens.

That's where Wordnerds comes in. Obviously we have skin in this game, but reading 50,000 open-text comments by hand is the kind of work that's perfect for software and miserable for humans. The framework in this playbook works whether you use us or pick another tool entirely; we're just one of the more effective ways to run it once the data outgrows what your team can sensibly read.

If you've worked through Layers 1–4 and you can already see gaps that matter, that's the moment our Smart Segmentation Report is built for. More detail at the bottom of the page ↓

When segmentation becomes how decisions get made

The shift

Running a four-layer audit is a useful exercise in its own right. The much bigger return only shows up when segmentation stops being something one team owns and starts being the framework that every team makes its decisions inside. From deliverable to infrastructure. From a thing the insight team produces to the language every other team uses. The change that compounds isn't technical—it's in how people talk about customers, in meetings, in briefs, in handovers.

Six months from now, this looks like…

Concretely, here's what changes once segmentation is doing real work:

- The board asks why retention is moving in a particular segment, and you can answer the question instead of caveating it. The segment explains the movement, not just describes it.

- Marketing and service stop arguing about which group needs what. Both sides are using the same segment names for the same people, and disagreements become about strategy, not definitions.

- The complaint pattern that used to surprise you is now one of the early-warning signals you actively watch.

- A "satisfied" group that quietly churned out from under you last year doesn't quietly churn this year—because you've stopped trusting "satisfied" as a single category, and the attitudinal layer is telling you which kind of satisfaction is which.

- When you make a campaign decision, an investment decision, a service-design decision, the audience rationale is legible to everyone in the room. We're spending this on these people for this reason. Defensible because it's documented, not because someone senior insisted on it.

None of this is a finished state. What you're aiming for isn't "segmentation, solved"—it's "segmentation, in use."

What becomes possible

When demographics, behaviours, attitudes, and people's own words come together in one framework, segmentation stops being about categorising people and starts being about understanding them—what they need, what they want, and what your organisation can actually help them with. It stops being reporting decoration and becomes part of how decisions get made every day.

That's the promise. And the line worth keeping in mind through every layer of the audit:

People don't disappear into averages.

What the Report does

We work with the customer feedback you already have—open-text survey responses, contact-centre transcripts, complaints, chat logs, whichever channels you have flowing—and run the four-layer framework against it. You come away with a clear picture of what each of the four layers shows in your data, plus the artefacts to keep using afterwards.

Specifically, in 30 days, you get:

- The Report. A written deliverable that walks through what each of the four layers shows in your data, with segments, evidence, and recommendations.

- Three deep dives. Specific findings we go deeper on, either across layers (e.g. an attitudinal segment showing up in your behavioural data) or within a single layer where there's enough variance to be worth digging into. Got specific questions you've been wanting to answer? "Why does Segment X churn at twice the rate of Segment Y?" Those count as deep dives too.

- A theme bank. The themes we surfaced in your data, in a format your team can keep using as new feedback flows in, so the segmentation stays current without us in the room.

- A Power BI dashboard. Live, interactive, and yours to keep. Your team can carry on cutting and watching the segments after we hand over.

- A workshop with your team. We sit down together, walk through the findings, and embed the work in the people who'll be using it day-to-day. The dashboard makes the segmentation discoverable; the workshop makes it operational.

Proven, documented, ready for your team to run with afterwards.

Who you'd work with

Helen and Sarah are your first point of contact—the people you'd talk to about scope, fit, timeline, and whether the Report shape is right for your team.

Our I&I (Insight & Innovation) team are the people actually running your data through the framework. They build the segments, run the deep dives, and put the dashboard together.

Special offer running now

Launch price: £4,900 + VAT (usually £9,600 + VAT).

Register your interest and we'll be in touch to talk through how it works.

We'll come back to you within two working days with a short call to understand the gap you'd want to focus on and confirm whether the Report shape is the right fit. No discovery-call marathon. Just a conversation to check fit and scope.

Not quite ready?

When the time comes to close a specific gap and you'd rather not do it solo, we're all ears. In the meantime, our CX Corner newsletter is the easiest way to keep the conversation going.

Limited Time Offer: The Smart Segmentation Report

If you've worked through the self-audit and there are layers you don't feel you're doing a great job on, we genuinely think we can help.

The Smart Segmentation Report is what we offer for exactly that: we run the four-layer framework against your existing customer data, surface what's in there, and walk you through the findings, the segments, and the theme bank your team can keep using afterwards.
